Deepfake-Driven Social Engineering: How AI Voice and Video Are Being Used to Bypass Security Controls

There is a moment in every successful social engineering attack when the target makes a decision to wire funds, share credentials, or grant access. That moment now comes faster than ever, because the voice on the other end of the line sounds exactly like someone you trust.

AI-generated voice and video have fundamentally changed the economics of impersonation fraud. What once required a skilled con artist with months of preparation can now be executed by a moderately technical attacker with less than a minute of audio and a commercially available cloning tool. The result is a new class of social engineering attack that bypasses the one control organizations have always relied on most: human judgment.

The Mechanics Behind the Deception

Deepfake-driven social engineering relies on two core technologies working in tandem: AI voice cloning and AI-generated video synthesis.

For voice cloning, attackers need surprisingly little to get started. As few as 30 seconds of clean audio, scraped from a LinkedIn video, a YouTube interview, or a recorded conference call, can be enough for modern AI models to replicate a person’s pitch, cadence, and accent. Commercial services such as ElevenLabs and Resemble AI, along with research models such as Microsoft’s VALL-E, have put this capability within reach of attackers who lack deep expertise. Once a voice model is trained, it can be run in real time, converting the attacker’s speech into the impersonated person’s voice mid-call.

Video deepfakes go a step further. By training generative models on footage of a target, capturing that person’s facial expressions, lip movements, and mannerisms, attackers can produce video that passes a visual inspection with alarming consistency. What was once reserved for nation-state actors with significant resources is now available through commercial tools that cost a fraction of what a single breach might yield.

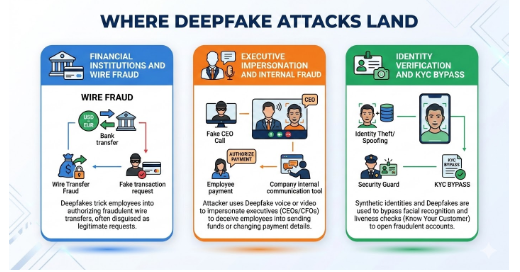

The Targets: Where Deepfake Attacks Land

Financial Institutions and Wire Fraud

Bank call centers have become primary targets. Fraudsters use voice clones to impersonate account holders to both interactive voice response (IVR) systems and human agents, attempting to initiate transfers, reset credentials, or gain account access. The pattern has become frequent enough that voice biometrics, once considered a strong second factor, is now treated by many security teams as a compromised control.

The consequences extend beyond individual accounts. In a case reported in Hong Kong in early 2024, a finance worker at a multinational firm was manipulated into transferring the equivalent of $25 million after joining a video call with what appeared to be the company’s CFO and other colleagues, all of whom were deepfakes.

Executive Impersonation and Internal Fraud

Attackers routinely impersonate C-suite executives to pressure employees into bypassing financial controls or sharing sensitive access. These attacks are effective because they exploit organizational hierarchy; few employees are willing to question or delay a request that appears to come from the CEO.

The software company Retool suffered a significant breach through a closely related vector, in which the attackers impersonated internal IT staff rather than an executive. They combined SMS phishing with a follow-up voice call in which the caller posed as a member of the IT team, reportedly using a deepfaked voice. The call’s believability was the critical factor that pushed the target to complete the compromise, which reportedly led to millions of dollars in cryptocurrency losses among Retool’s customers.

Identity Verification and KYC Bypass

Financial services, cryptocurrency exchanges, and regulated industries rely on Know Your Customer (KYC) processes to verify identity at onboarding. Deepfake videos and AI-altered identity documents are being used to defeat these checks, allowing fraudsters to open accounts under false identities. The cost of generating a deepfake for this purpose has reportedly dropped to under two dollars, while the potential returns run into the hundreds of thousands.

Why Traditional Controls Are Failing

Security programs were not designed for adversaries who can fabricate the sensory evidence that human verification depends on. Several assumptions are now broken:

Voice as a trusted identifier. Speaker verification systems trained on genuine voice patterns are increasingly unable to distinguish between a real voice and a high-quality clone. Liveness detection, the process of confirming that audio comes from a live speaker rather than a recording, is improving but remains exploitable.

Visual confirmation. Video calls were assumed to provide a higher assurance level than phone calls. Deepfake video technology has eroded that assumption. Real-time face-swapping tools can now run on consumer hardware, making video impersonation a viable attack vector.

Out-of-band verification. Organizations often rely on calling back a known number to verify a request. This control holds, but only if employees actually use it, and only if the attacker has not also compromised that channel.

Employee skepticism. Security awareness training teaches people to question suspicious emails. It has not kept pace with attacks where the voice, face, and communication style of a trusted colleague are all convincingly replicated.

Defending Against Deepfake Social Engineering

No single control defeats this class of attack. Defense requires layering technical detection with organizational procedures and, critically, removing the single-human decision point that most of these attacks rely on.

Multi-person authorization for high-value actions. Any wire transfer, credential reset, or access grant above a defined threshold should require independent verification from a second person through a separate, pre-established channel. This control survives deepfakes because it forces the attacker to deceive two people, over two separate channels, at the same time.
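The rule above can be sketched as a simple policy check. This is a minimal illustration, not a production implementation: the threshold value, the channel names, and the `TransferRequest`, `Approval`, and `may_execute` names are all hypothetical and would need to be mapped onto an organization's actual payment workflow.

```python
from dataclasses import dataclass, field

# Assumed values for illustration; the article only says "a defined threshold"
# and "a separate, pre-established channel".
APPROVAL_THRESHOLD_USD = 10_000
PRE_ESTABLISHED_CHANNELS = {"callback_known_number", "in_person", "signed_ticket"}


@dataclass
class Approval:
    approver: str  # person who confirmed the request
    channel: str   # channel on which the confirmation was obtained


@dataclass
class TransferRequest:
    requester: str
    amount_usd: float
    request_channel: str  # channel the request arrived on, e.g. "video_call"
    approvals: list[Approval] = field(default_factory=list)


def may_execute(req: TransferRequest) -> bool:
    """Return True only if the request satisfies the dual-control rule."""
    if req.amount_usd <= APPROVAL_THRESHOLD_USD:
        return True
    # An approval counts only if it comes from a second person, over a
    # pre-established channel that differs from the one the request used.
    return any(
        a.approver != req.requester
        and a.channel in PRE_ESTABLISHED_CHANNELS
        and a.channel != req.request_channel
        for a in req.approvals
    )
```

In this sketch, a convincing video call alone can never release a large transfer: the request stays blocked until a different person confirms it over a channel the attacker did not choose.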

Code word protocols. Teams that communicate frequently should establish rotating verification phrases, known only internally, that can be used to confirm identity on calls. This is low-tech and highly effective against audio deepfakes.
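One way to rotate such phrases without redistributing them by hand is to derive them from a shared secret and the current date, in the spirit of time-based one-time passwords. The sketch below is an assumption about how a team might do this, not a scheme the article prescribes; the word list, rotation period, and function names are hypothetical.

```python
import hashlib
import hmac
import time
from typing import Optional

# Hypothetical word list and rotation period. The list length divides 256
# evenly, so mapping a digest byte to a word introduces no bias.
WORDS = ["harbor", "lantern", "copper", "meadow",
         "falcon", "quartz", "willow", "ember"]
ROTATION_SECONDS = 24 * 60 * 60  # rotate once per day


def current_phrase(secret: bytes, now: Optional[float] = None, n_words: int = 2) -> str:
    """Derive the phrase for the rotation window that contains `now`."""
    timestamp = time.time() if now is None else now
    window = int(timestamp // ROTATION_SECONDS)
    digest = hmac.new(secret, str(window).encode(), hashlib.sha256).digest()
    return " ".join(WORDS[b % len(WORDS)] for b in digest[:n_words])


def phrase_matches(secret: bytes, spoken: str, now: Optional[float] = None) -> bool:
    """Compare a spoken phrase with the expected one in constant time."""
    expected = current_phrase(secret, now)
    return hmac.compare_digest(expected.encode(), spoken.strip().lower().encode())
```

With only eight words and two-word phrases there are just 64 possible phrases, so a real deployment would want a much larger word list. The secret must also be shared over a channel the attacker cannot observe.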

AI-powered detection at the network edge. Real-time detection tools that analyze audio and video streams for synthetic artifacts are now available. These tools look for inconsistencies in spectral patterns, unnatural prosody, and pixel-level anomalies that people cannot reliably see or hear but that can be detected algorithmically. Deploying these controls on the communication infrastructure, rather than relying on end users to spot fakes, is the more reliable approach.

Continuous monitoring and behavioral analytics. Detecting that an unusual request was made (a wire transfer initiated outside normal hours, or a credential reset following an executive call) is often the last line of defense. HawkEye AI provides behavioral monitoring that can surface these anomalies in real time, correlating activity across endpoints, identity systems, and communication channels to flag patterns that no individual employee would notice.
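The two examples in that paragraph can be expressed as simple rules over an event stream. The sketch below is a generic illustration and does not represent how HawkEye AI or any specific product works; the event kinds, business hours, and one-hour correlation window are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Assumed policy values for illustration.
BUSINESS_HOURS = range(8, 18)     # 08:00 to 17:59 local time
CALL_WINDOW = timedelta(hours=1)  # how long a call "taints" later actions


@dataclass
class Event:
    kind: str     # "wire_transfer", "credential_reset", or "executive_call"
    user: str     # employee the event is attributed to
    at: datetime  # when the event occurred


def flag_anomalies(events: list[Event]) -> list[tuple[Event, str]]:
    """Return (event, reason) pairs for events matching either rule."""
    flagged: list[tuple[Event, str]] = []
    last_exec_call: dict[str, datetime] = {}
    for ev in sorted(events, key=lambda e: e.at):
        if ev.kind == "executive_call":
            last_exec_call[ev.user] = ev.at
        elif ev.kind == "wire_transfer" and ev.at.hour not in BUSINESS_HOURS:
            flagged.append((ev, "wire transfer outside normal hours"))
        elif ev.kind == "credential_reset":
            call_at = last_exec_call.get(ev.user)
            if call_at is not None and ev.at - call_at <= CALL_WINDOW:
                flagged.append((ev, "credential reset shortly after executive call"))
    return flagged
```

Fixed rules like these only cover the two patterns named above. Production systems typically learn per-user baselines rather than relying on hard-coded hours and windows.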

Integration across security controls. Deepfake attacks do not exist in isolation. They are typically one layer in a multi-stage attack chain that may also involve phishing, credential theft, or malware. Security tools that operate in silos miss the full picture. HawkEye’s CSOC and XDR platform correlates signals across the entire attack surface, enabling analysts to detect and respond to coordinated campaigns rather than individual alerts.

Conclusion

Technical controls matter, but the organizations that handle these attacks best share a common attribute: they have removed the assumption that identity can be verified in a single interaction.

Security teams should audit every process that relies on voice or video as a trust signal and ask a direct question: What happens if this audio or video is fabricated? Where the answer is “the action proceeds anyway,” that is a process that needs redesign.
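That audit question can be applied mechanically to a process inventory. The sketch below assumes each process is recorded with the set of trust signals it relies on; the `Process` type, the signal names, and the example processes are hypothetical.

```python
from dataclasses import dataclass

# Signals that a deepfake can fabricate.
FORGEABLE = {"voice", "video"}


@dataclass
class Process:
    name: str
    trust_signals: set[str]  # e.g. {"voice", "callback", "second_approver"}


def needs_redesign(p: Process) -> bool:
    """True if the process uses voice or video and nothing else backs them up."""
    uses_forgeable = bool(p.trust_signals & FORGEABLE)
    remaining = p.trust_signals - FORGEABLE
    return uses_forgeable and not remaining


inventory = [
    Process("helpdesk password reset", {"voice"}),
    Process("vendor bank detail change", {"video", "second_approver"}),
]
to_fix = [p.name for p in inventory if needs_redesign(p)]
```

Here only the helpdesk password reset is flagged, because removing the voice signal leaves nothing else standing between the caller and the action.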

Employee training should shift from “how to spot a deepfake” toward “when to apply a second verification step.” The former relies on human perception against increasingly sophisticated AI. The latter is a procedural control that holds regardless of how good the fake becomes.