The Anthropic Code Leak: When a Packaging Error Becomes a Supply Chain Risk

In March 2026, portions of Anthropic’s internal “Claude Code” were exposed publicly through an npm package misconfiguration. The incident was not the result of a sophisticated intrusion or a targeted attack. It originated from a simple oversight in packaging controls. A missing exclusion rule allowed internal source code to be bundled and published unintentionally.

The exposure spread quickly. Mirrors of the leaked code gained traction across developer communities, drawing attention not because of the size of the codebase alone, but because of what the leak represented. A high-profile AI vendor had leaked part of its internal tooling through the same mechanisms used by thousands of software teams every day.

No user data was reported compromised. The real impact lies elsewhere.

How the Exposure Happened

When Anthropic released version 2.1.88 of the @anthropic-ai/claude-code npm package on March 30, 2026, it inadvertently bundled a source map file, cli.js.map, alongside the compiled JavaScript. A source map is a structured JSON file that maps minified or bundled code back to its original readable source. Developers use them for debugging. Security researchers use them for something else entirely.
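To make the risk concrete, here is a minimal sketch of how original files can be read back out of a source map. It assumes the map embeds the original text in the optional `sourcesContent` field defined by the version 3 source map format; the article does not state which fields the shipped cli.js.map contained. The function name `recover_sources` and the two-file example map are hypothetical.

```python
import json
from pathlib import PurePosixPath


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {source path: original text} for sources embedded in a source map."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    # "sourcesContent" is optional; when present it runs parallel to "sources".
    contents = source_map.get("sourcesContent") or []
    recovered: dict[str, str] = {}
    for path, content in zip(sources, contents):
        if content is None:  # entry is null when the text was not embedded
            continue
        # Drop "..", "." and root segments so every path stays inside one tree.
        parts = [p for p in PurePosixPath(path).parts if p not in ("..", ".", "/")]
        recovered["/".join(parts)] = content
    return recovered


# Hypothetical map for illustration; "mappings" is not needed for this recovery.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/util.ts"],
    "sourcesContent": ["export const main = () => 1;\n", None],
    "mappings": "",
})

recovered = recover_sources(example_map)
print(sorted(recovered))
```

No decoding of the `mappings` string is required: if the original text is embedded, anyone holding the file can write it back to disk with a few lines of code.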

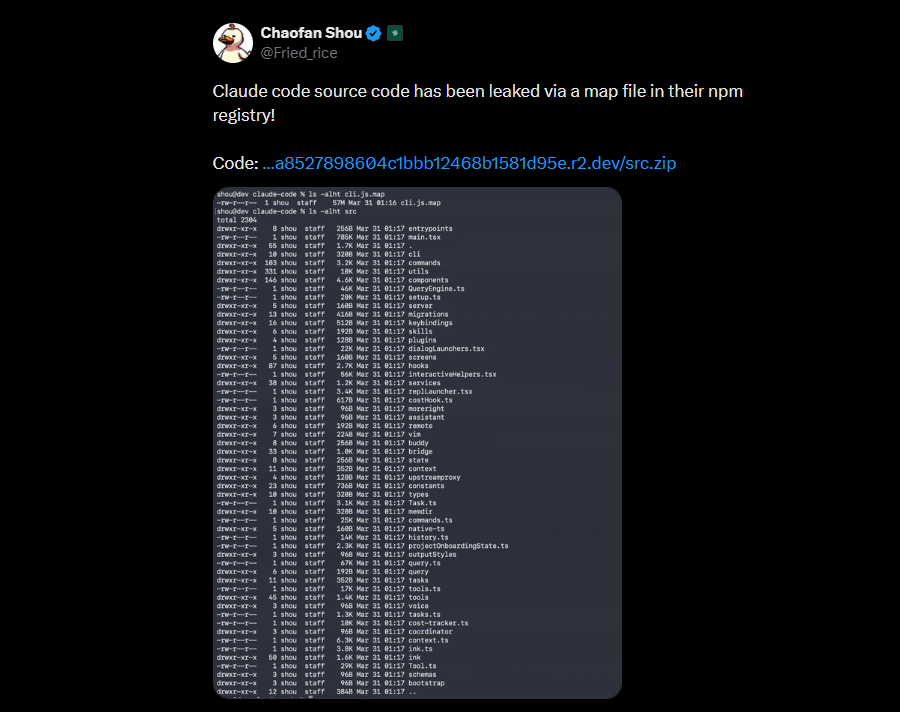

Security researcher Chaofan Shou spotted it almost immediately and posted to X: “Claude code source code has been leaked via a map file in their npm registry!” The post crossed 28 million views within hours. By then the damage was done: public GitHub mirrors had already reconstructed and republished nearly 2,000 TypeScript files totaling over 512,000 lines of code.

Anthropic confirmed the incident, stating it was “a release packaging issue caused by human error, not a security breach,” and that no customer data or credentials were exposed. The affected package version was pulled from npm, but mirrors of the reconstructed source remain publicly accessible.

What Was Inside

The reconstructed codebase gave outsiders something genuinely valuable: a blueprint of how Claude Code works internally.

Researchers combing through the source found several notable components. The tool uses a self-healing memory architecture designed to work around the model’s fixed context window, allowing it to manage long sessions more effectively than the raw API would permit. There is a multi-agent orchestration layer capable of spawning sub-agents to tackle parallel tasks. A bidirectional communication layer connects the CLI to IDE extensions.

Two features drew particular attention. The first is KAIROS, a background agent mode that lets Claude Code run autonomously, fix errors on its own schedule, and send push notifications to users without waiting for a prompt. The second is a “dream” mode, a continuous background reasoning state where Claude develops and iterates on ideas between active sessions.

Perhaps the most provocative discovery was an “Undercover Mode” for open-source contributions. The system prompt instructs Claude Code not to include any Anthropic-internal information in commit messages or pull request descriptions when working in public repositories. Whether this is responsible operational security or something more uncomfortable depends on your perspective.

Researchers also found evidence of anti-distillation controls, mechanisms that inject fake tool definitions into API responses specifically to poison training data if a competitor attempts to scrape Claude Code’s outputs. It is an aggressive defensive posture that, now that it is documented publicly, becomes far easier to study and potentially circumvent.

The Security Implications Are Bigger Than a Source Leak

Anthropic was correct that this was not a breach in the traditional sense. No database was compromised. No user data walked out the door. But treating a source map leak as merely embarrassing misses the real risk surface.

Claude Code is an agentic tool. It reads codebases, executes shell commands, edits files, manages credentials, and integrates with developer environments across terminal, IDE, and browser surfaces. When the implementation details of that kind of tool become readable, attackers do not need a zero-day; they get a study guide.

AI security firm Straiker described it plainly: instead of brute-forcing jailbreaks, attackers can now study how data flows through Claude Code’s context management pipeline and craft payloads designed to persist across long sessions. That is a meaningful capability upgrade for anyone with bad intentions.

This concern is not hypothetical. Claude Code has a documented history of trust-boundary vulnerabilities, with CVEs covering IDE origin handling, pre-trust command execution, read-only validation bypasses, and API key exfiltration via malicious repository settings. Each of those CVEs represents a category of implementation logic that is now easier to research with readable source. The source leak did not create those categories; it just reduced the barrier to exploring them.

The Supply Chain Problem Did Not Stop at Source Code

Within hours of the leak becoming public, a second problem emerged. Attackers began registering npm package names that matched internal dependencies visible in the reconstructed source, a classic dependency confusion attack. The packages, published by a user named pacifier136, included names like audio-capture-napi, image-processor-napi, and url-handler-napi. At the time of writing they were empty stubs, but that is by design. Dependency confusion attacks squat on names first and push malicious payloads later, targeting developers who try to compile the leaked code and inadvertently pull attacker-controlled packages.
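One way to spot this exposure in your own manifests is to flag dependency names that carry no npm scope and are not on a list you have verified. The sketch below assumes that heuristic; the function names, the version ranges, and the `trusted` set are hypothetical, and a real review would also confirm who owns each name on the registry.

```python
def unscoped_dependencies(dependencies: dict[str, str]) -> list[str]:
    """Names without an npm scope (@org/name), sorted alphabetically."""
    # An unclaimed unscoped name on the public registry can be registered by anyone.
    return sorted(name for name in dependencies if not name.startswith("@"))


def confusion_candidates(dependencies: dict[str, str], trusted: set[str]) -> list[str]:
    """Unscoped names that are not on the caller's verified list."""
    return [name for name in unscoped_dependencies(dependencies) if name not in trusted]


# Hypothetical manifest fragment; the three *-napi names are the ones cited above.
dependencies = {
    "@anthropic-ai/claude-code": "2.1.88",
    "audio-capture-napi": "*",
    "image-processor-napi": "*",
    "url-handler-napi": "*",
    "axios": "^1.0.0",
}
trusted = {"axios"}  # names your team has verified against the public registry

for name in confusion_candidates(dependencies, trusted):
    print(f"review before install: {name}")
```

Publishing internal packages under a scope your organization owns removes the ambiguity this check looks for, since only scope owners can publish beneath it.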

There is a compounding factor. Users who installed or updated Claude Code via npm on March 31, 2026, between 00:21 and 03:29 UTC may also have pulled a trojanized version of the Axios HTTP client carrying a remote access trojan. That was a separate, coincidental supply chain attack that happened to overlap the same distribution window. The two incidents are unrelated, but their timing compressed the exposure into a single window that organizations using npm-distributed Claude Code installations should treat as a priority audit item.
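For the audit itself, the window translates directly into a timestamp filter. The following sketch assumes you can recover install or update times from CI logs or host records; the host names and timestamps are hypothetical, and only the window boundaries come from the reporting above.

```python
from datetime import datetime, timezone

# Window reported for the trojanized Axios release (UTC).
WINDOW_START = datetime(2026, 3, 31, 0, 21, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 3, 31, 3, 29, tzinfo=timezone.utc)


def in_exposure_window(installed_at: datetime) -> bool:
    """True if a timezone-aware install time falls inside the reported window."""
    if installed_at.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    return WINDOW_START <= installed_at.astimezone(timezone.utc) <= WINDOW_END


# Hypothetical install records: host name -> ISO 8601 timestamp with offset.
install_log = {
    "ci-runner-1": "2026-03-31T02:15:00+00:00",
    "dev-laptop-7": "2026-03-30T22:40:00+00:00",
    "build-host-3": "2026-03-31T04:00:00+02:00",  # 02:00 UTC
}

flagged = sorted(
    host for host, stamp in install_log.items()
    if in_exposure_window(datetime.fromisoformat(stamp))
)
print(flagged)
```

Rejecting naive timestamps is deliberate: a log written in local time without an offset can shift a host into or out of a window that is barely three hours wide.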

Why This Matters Beyond a Single Vendor

Incidents like this are often dismissed as isolated mistakes. That view underestimates their broader significance.

Modern software development relies heavily on package ecosystems. npm, PyPI, and similar repositories act as distribution channels for both internal and external code. When internal components are packaged for convenience, the boundary between private and public artifacts becomes fragile.

This creates a supply chain risk that is difficult to detect through traditional security controls.

A packaging error in one organization can expose intellectual property, internal logic, and system behavior to a global audience within minutes. Once published, the code can be copied, mirrored, and analyzed indefinitely.

The Anthropic incident demonstrates how quickly that exposure can scale.

What Organizations Should Do

The most important first step for any organization using Claude Code is inventory. Anthropic has deprecated npm as an installation path and now recommends native installers. If your developers, CI runners, build systems, or shared development hosts still use the npm distribution path, resolve that first. The reason is not that the leak itself compromised those machines; it is that npm-based installations pull from a distribution chain that, as this incident demonstrated, was not adequately audited at release time.
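As a starting point for that inventory, the sketch below walks the JSON tree printed by `npm ls --json` and collects every installed version of the package. It assumes you have already captured that output from each host; the sample tree and the wrapper package name are hypothetical, and 2.1.88 is the release named earlier in this article.

```python
import json

PACKAGE = "@anthropic-ai/claude-code"
AFFECTED_VERSION = "2.1.88"  # the release that shipped the source map


def find_versions(tree: dict, package: str = PACKAGE) -> set[str]:
    """Collect every version of `package` found anywhere in an npm ls JSON tree."""
    found: set[str] = set()
    for name, info in tree.get("dependencies", {}).items():
        if name == package and "version" in info:
            found.add(info["version"])
        found |= find_versions(info, package)  # descend into nested dependencies
    return found


# Hypothetical captured output from one host.
sample_output = json.dumps({
    "name": "global",
    "dependencies": {
        "some-wrapper": {
            "version": "1.0.0",
            "dependencies": {PACKAGE: {"version": "2.1.88"}},
        },
        "typescript": {"version": "5.4.5"},
    },
})

versions = find_versions(json.loads(sample_output))
print(versions, AFFECTED_VERSION in versions)
```

Any host where the result is non-empty is still on the npm distribution path, whichever version it reports.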

Beyond version and installation hygiene, this incident is a prompt to review how Claude Code is used operationally. The tool’s permission modes range from read-only planning to fully autonomous execution. Organizations that routinely run Claude Code against unfamiliar or third-party repositories with broad permissions enabled should walk that back until trust posture is explicitly reviewed. Anthropic’s own documentation is candid that auto mode uses a classifier that “does not guarantee safety.” Those are the vendor’s words, and they deserve weight.

For teams that build software and publish their own packages, the lesson is architectural. Source maps need their own access model. The correct default is private upload to an observability backend, not bundling them into the artifact that ships to every developer who runs npm install. Scan your build output before publishing: not the source repository, but the actual tarball that will be uploaded, looking for .map files and sourceMappingURL directives. It is a five-minute check that would have prevented this incident entirely.
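A pre-publish check of this kind can be sketched in a few lines. The example assumes the tarball was produced with `npm pack` and is opened as a binary file; the function names, the list of text-file suffixes, and the in-memory demo archive are illustrative choices rather than an established tool.

```python
import io
import tarfile

TEXT_SUFFIXES = (".js", ".mjs", ".cjs", ".css")


def scan_tarball(fileobj) -> list[str]:
    """List source map files and sourceMappingURL directives inside a package tarball."""
    findings = []
    with tarfile.open(fileobj=fileobj, mode="r:*") as archive:
        for member in archive:
            if not member.isfile():
                continue
            if member.name.endswith(".map"):
                findings.append(f"{member.name}: source map file")
            elif member.name.endswith(TEXT_SUFFIXES):
                data = archive.extractfile(member).read()
                if b"sourceMappingURL=" in data:
                    findings.append(f"{member.name}: sourceMappingURL directive")
    return findings


def build_demo_tarball(files: dict[str, bytes]) -> io.BytesIO:
    """Build a small gzip tarball in memory so the scan can be demonstrated."""
    buffer = io.BytesIO()
    with tarfile.open(fileobj=buffer, mode="w:gz") as archive:
        for name, data in files.items():
            info = tarfile.TarInfo(name)
            info.size = len(data)
            archive.addfile(info, io.BytesIO(data))
    buffer.seek(0)
    return buffer


demo = build_demo_tarball({
    "package/cli.js": b"console.log(1);\n//# sourceMappingURL=cli.js.map\n",
    "package/cli.js.map": b"{}",
    "package/package.json": b"{}",
})
findings = scan_tarball(demo)
for finding in findings:
    print(finding)
```

In a release pipeline the same function would be called on the real file, for example `with open(path_to_tgz, "rb") as f: scan_tarball(f)`, and the publish step would fail whenever the returned list is non-empty.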

Conclusion

This incident is worth studying not because Anthropic made a catastrophic mistake, but because the mistake was ordinary. A missing .npmignore. A debug artifact that made it past the review gate. A release shipped before the distribution chain was fully audited. These are the kinds of failures that happen at every organization moving quickly, and the reason this one mattered more than most is the nature of the tool involved.

Agentic software (tools that read files, execute commands, manage credentials, and act on your behalf) represents a different risk category from passive applications. When that class of software leaks its implementation, the consequences are not just about IP or competitive advantage. They are about whether the guardrails the tool relies on can be studied, probed, and eventually bypassed by people who now have considerably more to work with.

That is the genuine takeaway from the Anthropic incident. Not that one company had a bad week. But that as agentic tools become standard infrastructure in development workflows, the security controls around how those tools are built and shipped need to match the sensitivity of what they do.

HawkEye provides cybersecurity advisory, penetration testing, and secure development lifecycle services. For questions about supply chain security or agentic tool risk assessments, contact our team.